The latest Internet trend that has people talking is the creation of art through various artificial intelligence programs. To create artistic renditions of their users, these programs ask people to upload photos into the system. While it looks like a fun fad, in reality it causes harm. The intent of this article is to bring awareness to the dangers of using A.I. art. If you have already taken part in the trend, we hope this editorial will give you the information you need the next time you consider testing out A.I. art.

What is A.I. Art?

One of the popular programs that people are using is Lensa AI. This system uses a Stable Diffusion model to process the uploaded photos and generate portraits in a digital art style. A common use of Stable Diffusion is to create images based on text descriptions. In the example below, the user uploaded an image (left-hand side) into an A.I. system and included a text prompt to generate a more detailed result (right-hand side).

Although it seems fun to play around with, these systems data mine artwork without consent from the original artists. As a matter of fact, some sites advertise the art styles of the creators they are trying to imitate. Speaking frankly, it’s very bold of them to pull artwork, especially copyrighted artwork, and include the names of the artists they are taking it from.

Is A.I. Art Legal?

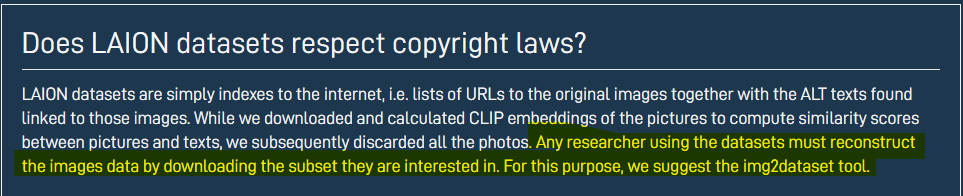

Stable Diffusion can bypass copyright laws because its models are trained on open-source data from a non-profit organization called LAION. Basically, LAION’s datasets include links to Internet images, but LAION doesn’t store the actual pictures. However, the organization’s FAQ states that any person with the appropriate tools can reconstruct the images from the links provided by LAION.

For example, A.I. artist Lapine was shocked to find photos of her face from a medical exam she did in 2013. As shown in her tweet below, she had signed a consent form allowing the pictures to be collected solely for medical reasons. Somehow her medical images found their way online and became part of LAION’s dataset.

Unfortunately, Lapine would have a slim chance of getting LAION to delete the link to her image. According to LAION’s FAQ, if someone sees their name on LAION’s systems, the corresponding image has to match the person’s name (and vice-versa) for it to fall under personal data. Don’t you just love loopholes?

Read the Fine Print

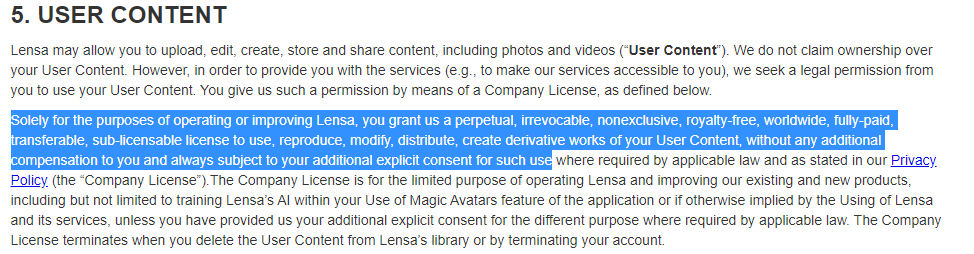

In Lapine’s case, her images still ended up online even though her consent form stated the pictures would be used for medical purposes only. For those who willingly engage in A.I. art, the terms and conditions they’ve agreed to are very worrisome.

When accessing different sites and apps, it’s muscle memory to skip over the fine print and just hit “Accept.” Lensa AI’s terms and conditions state that any photos on its system can be reproduced and redistributed commercially worldwide on different sites without any compensation to the user. This is scary to think about in a world where deepfakes exist.

When discussing another A.I. system called Dreambooth, Abhishek Gupta, founder, principal researcher and director of the Montreal AI Ethics Institute said:

“It’s maybe a leap forward in terms of the potential negative impacts that are going to arise, because it’s now allowed outputs that are a lot more convincing. They’re a lot easier to produce. They can be produced at scale.”

-Abhishek Gupta, founder, principal researcher and director of the Montreal AI Ethics Institute

What’s even more terrifying to think about is the fact that anyone can upload any person’s images into these A.I. systems; it doesn’t have to be their own. If you have enough pictures of yourself online, someone can input them into the program and your likeness will be used for who knows what. For now, it’s easy to distinguish between A.I. art and reality, but technology advances fairly quickly and it soon may be harder to separate the two.

The Dilemma Surrounding A.I. Art

In spite of what has been discussed so far, A.I. art is something new and revolutionary. It can produce high-quality images relatively quickly and at a low cost with just a few samples and a line of text input. It can also be a helpful tool that lets the artistically challenged produce art. These are fair arguments in favor of A.I. art.

However, these A.I. recreations are hurting the artists who inspired the images. In a Twitter thread, Jenny Yokobori, a voice actress, shared her concerns regarding A.I. art reproductions. To summarize her thoughts, she mentions trying out an A.I. art program but instantly regretting it, because she could see how the results resembled the thousands of hours of work done by real artists.

In an article on The Mary Sue, Jack Doyle mentions an influential South Korean artist, Kim Jung Gi, who passed away due to a heart attack on October 3, 2022. Just THREE DAYS later, an A.I. art programmer trained a system to imitate Kim Jung Gi’s art style as an “hommage [sic].” Maybe the better way to pay homage to Kim Jung Gi is to share the work he actually created.

Supporting the Real Artists

I’d like to emphasize that if you have engaged with A.I. art, I do not hold it against you. The images these systems are able to create are remarkable, so I understand the appeal. Now that you are informed of the harm A.I. art causes, do your best to seek out artists whose art style attracts you and support them. Reach out to them to see if they do commissions. And if you can’t afford their work, share it with your community.

Speaking frankly, I personally know artists who struggle to get people to notice their work. The art industry is vast and competitive. Now that A.I. art is in the mix, it is even harder for them to stand out and feel unique when they are competing with a system that can grab images from all over the Internet.

For more information regarding A.I. art, including responses to any arguments for it you might have, check out this thread by PeachiieShop on Twitter. To learn about where Agents of Fandom stands in terms of equality, check out the Agents of Change tab.
